Moore's Law for Quantum Computers

2011-09-07

Looking at the history of quantum computing experiments, one finds an exponential increase in the number of qubits, similar to Moore's law for classical computers. The number of qubits doubles about every six years; if this trend continues, quantum computers for real applications will arrive in nine to twelve years.

|

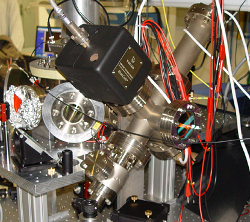

Ion trap quantum computer experiment in Innsbruck (photo by Markus Nolf) |

The challenge of scalability in quantum computing remains a subject of lively speculation, both within the scientific community and beyond it. Yet, to my knowledge, nobody has ever made an effort to quantify the track record of the field. Such an empirical analysis allows us to make predictions that go beyond the usual saying that practical quantum computers are "twenty years away".

Of course, if we wish to quantify things, we first have to specify the rules of the game. First, since we are interested in scalability, the natural quantity of interest is the number of qubits realized in an experiment. Second, experiments should demonstrate controlled coherent manipulation of individual quantum objects, such as multi-qubit gates or the generation of mutual entanglement. This rules out, for example, liquid-state NMR ensemble realizations done with pseudopure states, such as Ike Chuang's remarkable seven-qubit prime factorization experiment. Finally, "controlled manipulation" implies that entanglement is not merely generated as a natural process; otherwise the Bell experiments going back to Clauser in 1972 would count as a two-qubit quantum computer. Alternatively, we could say that we look only at experiments with at least three qubits, as this is where the real business starts. In any case, it is perfectly fine to use such natural events as a resource for creating higher-order entangled states, as is done in linear optics quantum computers. While there is certainly some ambiguity in these definitions, they have little impact on the results.

Having established the rules to play by, the first experiment fulfilling the criteria is the demonstration of the Cirac-Zoller gate for two trapped ions by Dave Wineland's group at NIST in 1995. In the following years, the number of qubits grew rapidly, with ion trap experiments and linear optics implementations competing for the top spot. Currently, the world record in mutual entanglement stands at 14 qubits, demonstrated last year by Rainer Blatt's ion trap group in Innsbruck. If we plot the progression of these records over time, we obtain the following plot:

As the qubit scale is logarithmic, the straight-line trend corresponds to an exponential increase, similar to Moore's law for classical computers. The blue line is a fit to the data, indicating a doubling of the number of qubits every 5.7 ± 0.4 years. We can now use this exponential fit to make some very interesting predictions.
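To make the notion of a doubling time concrete, here is a minimal sketch that estimates it from only the two records named explicitly in the text: 2 qubits in 1995 and 14 qubits in 2010 (the year is an assumption, taking "last year" relative to this post's 2011 date). The fit in the plot uses all records, so this two-point shortcut need not reproduce 5.7 years exactly. The function name `doubling_time` is hypothetical.

```python
import math

def doubling_time(year_a, qubits_a, year_b, qubits_b):
    """Years per doubling for an exponential through two (year, qubits) points."""
    # Number of doublings between the two records.
    doublings = math.log2(qubits_b / qubits_a)
    return (year_b - year_a) / doublings

# Two records mentioned in the text; 2010 is assumed from "last year" in a 2011 post.
t_double = doubling_time(1995, 2, 2010, 14)
print(f"{t_double:.1f} years per doubling")
```

This two-point estimate comes out at roughly 5.3 years, at the lower edge of the fitted range quoted above.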

Of course, we would like to know when we can expect quantum computers to become superior to classical computers, at least for some problems. The first real applications of quantum computers will come in the area of simulating difficult quantum many-body problems arising, for example, in high-temperature superconductivity, quark bound states such as protons and neutrons, or quantum magnets. For these problems, the record for classical simulations currently stands at 42 qubits. While classical computers will see future improvements as well, a conservative estimate is that you need to control 50 qubits in your quantum computer to beat classical simulations. Extrapolating from our exponential fit, we can expect this to happen between 2020 and 2023. Optimization and search problems benefiting from Grover's algorithm could become tractable somewhat later, but that depends a lot on the problem at hand. If you are bold enough to believe that the same scaling continues even further, 2048-bit RSA keys would come under attack somewhere between 2052 and 2059.
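The extrapolation to 50 qubits can be sketched as follows, assuming the exponential model N(t) = N0 · 2^((t − t0)/T) with the doubling time T = 5.7 ± 0.4 years quoted for the fit. Anchoring the curve at the first record (2 qubits in 1995) is an assumption made here for illustration; the actual fit need not pass through that point, so the numbers only roughly track the window given in the text. The function name `year_reaching` is hypothetical.

```python
import math

def year_reaching(target_qubits, anchor_year, anchor_qubits, doubling_years):
    """Year at which anchor_qubits * 2**((t - anchor_year) / doubling_years) hits the target."""
    return anchor_year + doubling_years * math.log2(target_qubits / anchor_qubits)

# Assumed anchor: the first record from the text (2 qubits, 1995).
# Doubling times span the quoted 5.7 +/- 0.4 years.
for doubling_years in (5.3, 5.7, 6.1):
    year = year_reaching(50, 1995, 2, doubling_years)
    print(f"T = {doubling_years} years -> 50 qubits around {year:.1f}")
```

With the central value of 5.7 years, this anchor puts the 50-qubit mark in 2021, inside the 2020 to 2023 window. The same function could be applied to the RSA estimate once a target qubit count is chosen; the text does not state the count it assumes.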

So, is this really going to happen? The current experiments are limited by technical error sources, and theorists like myself are constantly developing new ideas on how to deal with the fundamental problems. And even if the current designs turn out not to be scalable beyond a certain size, other architectures based on diamond defect centers or superconducting circuits are rapidly catching up. My personal take is that the current exponential increase could certainly last for another decade, which makes the estimate of reaching 50 qubits by then sound realistic. However, whether the quantum version of Moore's law will last as long as its classical counterpart is something that only time will tell.

Copyright 2006--2011 Hendrik Weimer. This document is available under the terms of the GNU Free Documentation License. See the licensing terms for further details.